The challenges of image recognition

When you asked a computer scientist more than a decade ago to make a computer recognize a seagull on a busy beach, he or she would have a good laugh. How one could automatically classify individual objects within an image was far from straightforward, both from an algorithmic, as from a computational point of view.

In 2021, the same task could be an assignment in any computer scientist bachelor course. With the help of deep learning, better digital cameras, pre-trained networks and datasets and especially specialized computing devices such as GPUs and TPUs, we have really come a long way ( and in a very short time). What is even more intriguing is that all this technology is already implemented in many devices and workflows. Want to find all your dog photos on your phone? Simply type dog in the search bar, your phone will show you all the images with a dog in it. Try it yourself!

This classification of objects can also be done in real-time. You might have already seen object detection algorithms drawing rectangular bounding boxes around them, and determining the class of each detected object. Object detection applications are used in many different industries and especially have the potential to automate inspection tasks. We talked about this in our previous blog post, ‘How you can automate inspection tasks with modern computer vision‘.

But despite the impressive progress computer vision made in the last couple of years, it can still be challenging to start using this technology. In this blog post, we will share some tips and tricks to get started successfully.

How to choose your camera?

Technical characteristics of the camera

When choosing a camera for machine vision, there are several important factors to take into account. Keep in mind that this is the device that will generate all data, so it is important to take ample time to pick the right camera for the right task. Remember garbage in-garbage out, no matter how advanced your algorithms further down the line are.

Now, picking the right camera can be quite overwhelming, as many vendors are offering a plethora of different options. Pricing is not always clear, so comparing one-on-one is not straightforward.

A Monochrome USB 3.1 Machine Vision Camera with CMOS sensor

A good way to start is to think about what exactly your camera needs to detect and in which conditions. A normal RGB camera is useless to detect during the dark night and a hyperspectral camera to detect faces might also not be the most cost-efficient idea.

Next, think about the operation mode. How many times per second do we need data from the camera and what does it need to output? E.g. in conveyor belt situations, specialized line scan camera’s can certainly have their merit.

Finally, what is the amount of data we need to process and where? This is definitely one of the main questions that determine your camera choice. Nowadays, computer vision camera’s can come with integrated specialized hardware and algorithms. If you would like some more flexibility or require additional custom algorithms, you could integrate your camera on an Nvidia Jetson board with some GPU power for a solution on the edge. Alternatively in settings where you only need to process a couple of images per hour, a ‘normal’ camera that uploads images to the cloud for processing can be the best option.

What is the level of detail required to detect the content?

In the previous paragraph, we avoided the elephant in the room: what level of detail do we need? The more powerful the camera, the clearer the images will be or maybe they can even compensate for poor lighting or other conditions. The quality of the image is often expressed as the image resolution. The higher the number of megapixels, the sharper and more precise the image will be. This however is not an absolute truth, especially in the higher ranges: more megapixels doesn’t always give you a clearer image. However, more pixels always give you a lot more computing you need to do, so be careful not to over-dimension.

To get started, try to make an estimated guess on which kind of quality you need for the case at hand. Keep in mind that if you cannot see it in the picture, most likely the computer vision algorithm will also struggle. A good idea is to take a slightly better camera than the one you think you need to develop a prototype or do the first tests. (At least, if your computational resources can handle it). After all, the algorithmic development is not simple and in this phase, you want to be sure that it’s not your camera that is holding you back. You can always experiment with lower-cost camera’s after you got something working before deciding on the exact one you will use to roll out your solution.

The higher the image resolution, the more details are visible.

Of course, the resolution is not everything. In a video, business scenario’s the amount of frames that the camera can output is equally important.

Don’t forget about the sensor

An increasing resolution has been the trend in the camera sensor market. However, it’s important to know that sensor size is more important. The sensor size is the amount of physical sensor area that’s exposed to light for image acquisition. So here, size does matter as the more the area the more the sensitivity to light. The choice of camera sensor size depends really on the application.

The sensor size chosen also needs to be matched with the correct lens. Lenses for larger sensor sizes tend to be more expensive but will be well worth the extra cost to take advantage of the full sensor area. So make sure you choose appropriately given the application and camera choice.

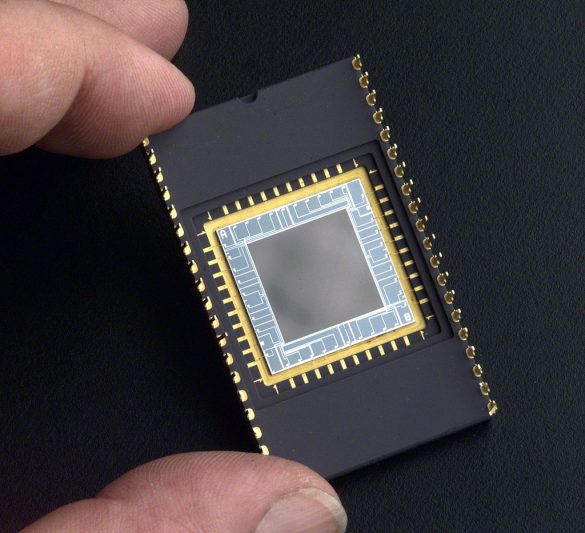

There are four types of image sensors: CCD sensors, CMOS sensors, microbolometers and Focal Plan Array (FPA) sensors. To find the right sensor, it is important to define ahead of time the inspection spectrum you will need. For example, a CCD sensor cannot use infrared imaging. Your choice will also be determined by the quality you are looking for and the budget you have.

An example of a CCD sensor

Finally, do not forget the importance of the lenses attached to the camera. Their optical quality, maximum aperture and focal length directly affect the quality of the images formed on the sensor.

One final tip before we move away from the hardware and start talking about software: companies offering specialized computer vision camera’s will most likely offer their help in picking the right camera. Of course, they have some commercial bias in their decision making but it can still be helpful in this fast-changing sector.

Setup choices and conditions that impact image recognition

Let us talk a little bit more about the challenges of the operation of object detection itself. In our previous blog post, ‘it’s raining cats and dogs’ we explain how image recognition is one of the biggest success stories of machine learning technology and how it’s possible with the help of deep learning. But there are still challenges for computers to always accurately recognize the trained image. For the sake of simplicity, our example will be a can of Coca-Cola. Let’s hope Ronaldo doesn’t leave a thumbs-down!

What viewpoint is the best?

Object viewed from different angles may look completely different. For example, the objects can differ from each other because they show the object from different sides. If you want to detect certain objects: ask yourself the question if you can control the viewpoint? If not, know that your algorithm will require data from different viewpoints before it will be able to generalize to all potential angles. If you can, be sure to position your camera such that the task becomes easier.

Deformation or in general: variations of the same

Objects are not always solid things but can also deform and change their shapes and colours. This provides additional complexity to the object detection processing.

If you look at the can that is deformed, the computer would likely not detect the can if it was trained to detect a full and untouched one.

This broader concept applies to many things we would like to detect. E.g. cats and dogs come in many shapes and sizes. In your data collection process, make sure there is diversity and avoid strong imbalances. In addition, consider using data augmentation to generate realistic variants in cases where you can.

You can’t detect what you can’t see

Sometimes objects can be obscured by other things. There is really no algorithm that can help you there. Having multiple camera’s or using information from multiple images in time are the only options you can consider.

Illumination conditions

Lightning is one of the most important things in image processing. An object will look different depending on the lighting conditions. A can with good lightning conditions will have much more details and objects visible than one that is underexposed.

From our experience, we know that poor attention to lighting conditions is one of the major reasons why computer vision algorithms tend to perform poorly. If you can: control the lightning conditions as much as possible. Introduce artificial lightning, avoid shadows, glares and reflections.

If you can’t control certain effects: be sure to include them in your dataset. A weed detection algorithm trained on a rainy and dark evening in winter will likely struggle to detect the same plant on a hot and sunny summer day.

Speed

Items can move at different speeds on the camera. Consider a camera attached to a drone. Fast speeds might blur or stretch objects. Moving at high speeds also causes the environment to change rapidly. A good example of this is a self-driving car. Here, the object detection algorithms must accurately classify important objects but also be incredibly fast during prediction to be able to identify objects that are in motion. Or at the production belt that you can see here. Be sure your processing and camera can keep up. Once you start dropping frames, it can be hard to recover.